What Does It Mean to Orchestrate AI Tasks?

Orchestration is about coordination. It means taking many individual tasks — classification, extraction, reasoning, image generation, tool calls — and running them in the right order, handling failures gracefully, and delivering combined results. Today, most people orchestrate AI tasks manually: open one tool, get a result, paste it into the next tool, check for errors, and repeat.

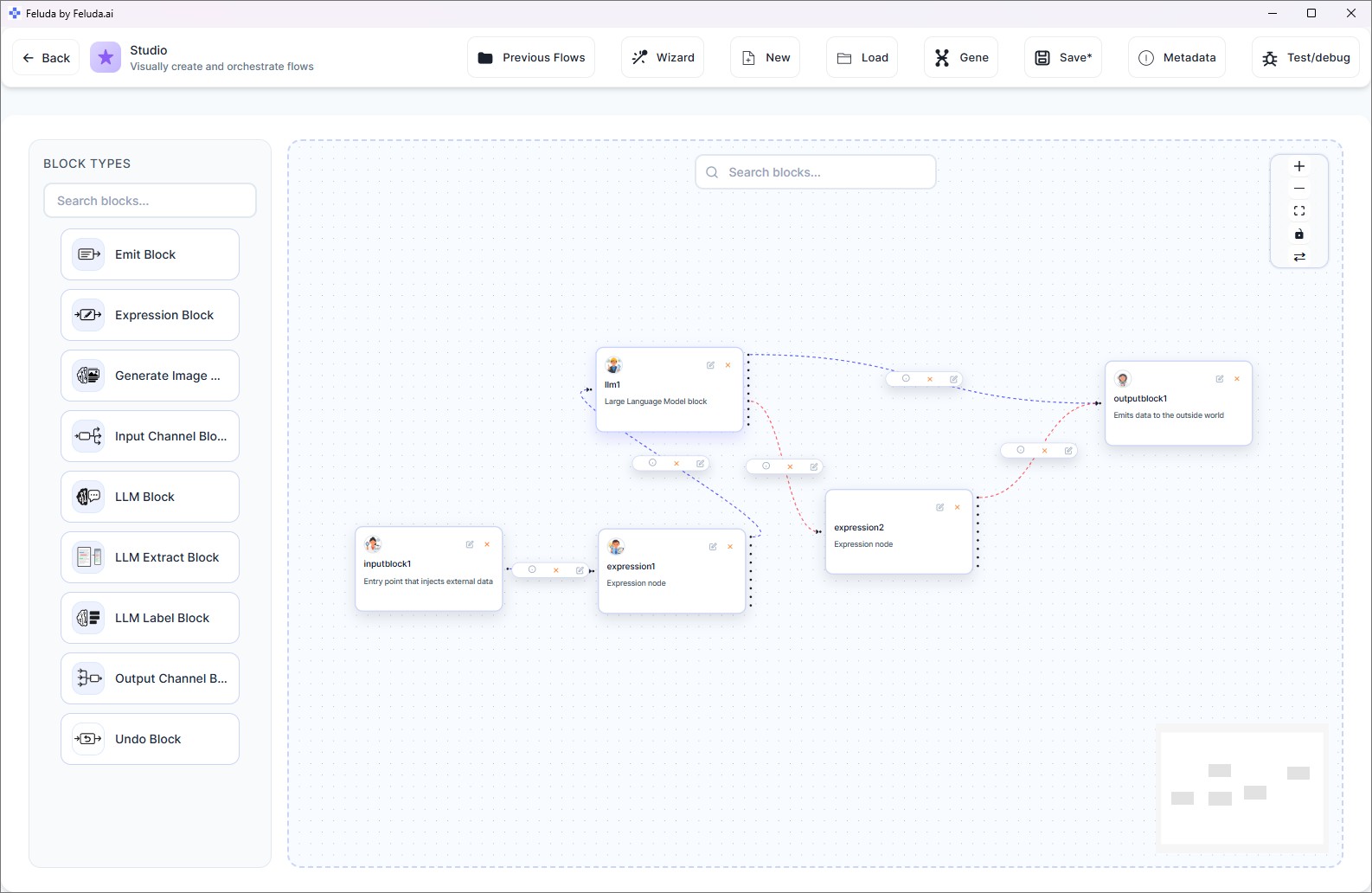

Feluda replaces that manual coordination with a visual canvas. You define the sequence, the parallel paths, the error routes, and the scheduling — and Feluda executes the whole thing for you.

Five Orchestration Capabilities in Feluda

Sequential Execution

Chain blocks in order. Data flows from one step to the next. The output of classification feeds into extraction, which feeds into reasoning, which feeds into the final report. Each step depends on the previous one.
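For readers who think in code, the same idea can be sketched in plain Python. This is only a conceptual illustration, not Feluda's API: Feluda itself needs no code, and `run_chain`, `classify`, `extract`, `reason`, and `report` are hypothetical stand-ins for the blocks you would place on the canvas.

```python
from functools import reduce
from typing import Any, Callable

Step = Callable[[Any], Any]


def run_chain(steps: list[Step], data: Any) -> Any:
    """Pass data through each step in order; each output is the next input."""
    return reduce(lambda value, step: step(value), steps, data)


# Hypothetical stand-ins for AI blocks (simple rules instead of model calls).
def classify(text: str) -> dict:
    label = "billing" if "invoice" in text.lower() else "general"
    return {"text": text, "label": label}


def extract(doc: dict) -> dict:
    amounts = [word for word in doc["text"].split() if word.startswith("$")]
    return {**doc, "amounts": amounts}


def reason(doc: dict) -> dict:
    action = "pay" if doc["amounts"] else "file"
    return {**doc, "action": action}


def report(doc: dict) -> str:
    amounts = ", ".join(doc["amounts"]) or "no amounts"
    return f"{doc['label']}: {doc['action']} ({amounts})"


result = run_chain([classify, extract, reason, report], "Invoice total $120 due Friday")
print(result)
```

Because each step only sees the previous step's output, reordering the list changes the behaviour, which is the dependency the canvas connections express visually.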

Parallel Branching

Connect one block's output to multiple downstream blocks. They all run simultaneously. Compare three AI providers on the same input, or process the same data through different analysis paths at once.
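As a rough code analogy (again, not how Feluda is implemented), fanning one input out to several branches might look like the sketch below. The `fan_out` helper and the three `provider_*` branches are hypothetical placeholders for real provider calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def fan_out(branches: dict[str, Callable[[str], str]], data: str) -> dict[str, str]:
    """Send the same input to every branch concurrently and collect results by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(branches))) as pool:
        futures = {name: pool.submit(fn, data) for name, fn in branches.items()}
        return {name: future.result() for name, future in futures.items()}


# Hypothetical branches; real ones would call different AI providers.
branches = {
    "provider_a": lambda text: text.upper(),
    "provider_b": lambda text: text[::-1],
    "provider_c": lambda text: f"{len(text.split())} words",
}

outputs = fan_out(branches, "hello world")
print(outputs)
```

Threads suit this pattern when the branches mostly wait on network calls, which is the typical case for provider requests.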

Conditional Routing

LLM Label blocks classify data and route each category to a different path. "Urgent" items go to one pipeline, and "routine" items go to another. The AI decides; the orchestration follows.
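In code terms, conditional routing amounts to a label function plus a lookup table of paths. The sketch below is illustrative only: `label` uses a keyword rule as a stand-in for the LLM classification, and the handler names are assumptions.

```python
from typing import Callable


def label(item: str) -> str:
    """Stand-in for an LLM Label block: a keyword rule instead of a model call."""
    urgent_words = ("outage", "asap", "breach")
    return "urgent" if any(word in item.lower() for word in urgent_words) else "routine"


def route(items: list[str], routes: dict[str, Callable[[str], str]]) -> list[str]:
    """Send each item down the path registered for its label."""
    return [routes[label(item)](item) for item in items]


# Hypothetical downstream pipelines, one per label.
routes = {
    "urgent": lambda item: f"PAGE ON-CALL: {item}",
    "routine": lambda item: f"QUEUE: {item}",
}

routed = route(
    ["Production outage in EU region", "Please update my mailing address"],
    routes,
)
print(routed)
```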

Error Routing and Failover

Every AI block exposes typed error outputs: rate limit, timeout, content filter, and tool failure. Route each error to a specific recovery path, such as a different provider, a retry, or a human review step, so that a failed block is handled instead of stopping the whole workflow.
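One way to picture typed error outputs is as distinct exception types, each mapped to its own recovery function. The sketch below assumes hypothetical exception classes and a hypothetical `run_with_routes` helper; none of these names come from Feluda.

```python
from typing import Callable


class BlockError(Exception):
    """Base class for the hypothetical typed block errors."""


class RateLimitError(BlockError):
    pass


class BlockTimeout(BlockError):
    pass


class ContentFilterError(BlockError):
    pass


class ToolFailure(BlockError):
    pass


Recovery = Callable[[str, BlockError], str]


def run_with_routes(block: Callable[[str], str], data: str, routes: dict[type, Recovery]) -> str:
    """Run a block; on a typed error, hand the input to the matching recovery path."""
    try:
        return block(data)
    except BlockError as err:
        recover = routes.get(type(err))
        if recover is None:
            raise  # no recovery path was defined for this error type
        return recover(data, err)


def primary(prompt: str) -> str:
    raise RateLimitError("429 from primary provider")  # simulated failure


def secondary(prompt: str) -> str:
    return f"[secondary] summary of: {prompt}"


review_queue: list[str] = []


def send_to_review(prompt: str, err: BlockError) -> str:
    review_queue.append(prompt)
    return "pending human review"


routes = {
    RateLimitError: lambda prompt, err: secondary(prompt),
    ContentFilterError: send_to_review,
}

answer = run_with_routes(primary, "Q3 sales notes", routes)
print(answer)
```

An error type with no route is re-raised here, which mirrors the design point in the text: recovery only happens for the error outputs you explicitly connect.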

Scheduled Orchestration

The Schedule Manager runs your orchestrated workflow automatically. Set it to execute hourly, daily, weekly, or on a specific date. The entire coordination happens without your involvement.
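The scheduling logic can be approximated with a few lines of date arithmetic. This is a simplified sketch under stated assumptions: `INTERVALS`, `next_run`, and `run_if_due` are hypothetical names, only the recurring intervals are modelled, and the one-off "specific date" option is left out.

```python
from datetime import datetime, timedelta
from typing import Callable, Optional

# Recurring intervals named in the text, expressed as time deltas.
INTERVALS = {
    "hourly": timedelta(hours=1),
    "daily": timedelta(days=1),
    "weekly": timedelta(weeks=1),
}


def next_run(last_run: datetime, interval: str) -> datetime:
    """Return the next scheduled time after the previous run."""
    return last_run + INTERVALS[interval]


def run_if_due(
    workflow: Callable[[], str],
    last_run: datetime,
    interval: str,
    now: datetime,
) -> tuple[Optional[str], datetime]:
    """Run the workflow once its next slot has arrived; otherwise do nothing."""
    due_at = next_run(last_run, interval)
    if now < due_at:
        return None, last_run
    return workflow(), due_at


last = datetime(2024, 1, 1, 7, 0)
result, last = run_if_due(lambda: "report sent", last, "daily", datetime(2024, 1, 2, 7, 5))
print(result, last)
```

Advancing `last_run` to the scheduled slot rather than to the actual run time keeps a daily 7 AM job anchored at 7 AM even if one run starts a few minutes late.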

Real Orchestration Scenarios

Document Processing Pipeline

Input → PII scan (Expression block) → Classify (billing / legal / technical) → Route each type to a specialised extraction block → Merge results → Generate summary report → Output. Six tasks, one orchestrated pipeline.
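To make the flow concrete, here is a minimal Python sketch of the same pipeline. Everything in it is an assumption for illustration: the email-only PII pattern, the keyword classifier standing in for an LLM, and the regex extractors are hypothetical and far simpler than real blocks.

```python
import re

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def scan_pii(text: str) -> str:
    """Redact email addresses (a deliberately narrow stand-in for a PII scan)."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


def classify(text: str) -> str:
    """Keyword rule standing in for LLM classification."""
    lowered = text.lower()
    if "invoice" in lowered or "refund" in lowered:
        return "billing"
    if "contract" in lowered or "clause" in lowered:
        return "legal"
    return "technical"


# One specialised extractor per document type.
EXTRACTORS = {
    "billing": lambda t: {"amounts": re.findall(r"\$\d+(?:\.\d{2})?", t)},
    "legal": lambda t: {"clauses": re.findall(r"clause \d+", t.lower())},
    "technical": lambda t: {"error_codes": re.findall(r"\bE\d{3}\b", t)},
}


def process(docs: list[str]) -> dict:
    """Scan, classify, extract, merge, and summarise a batch of documents."""
    records = []
    for doc in docs:
        clean = scan_pii(doc)
        kind = classify(clean)
        records.append({"type": kind, "text": clean, **EXTRACTORS[kind](clean)})
    counts: dict[str, int] = {}
    for record in records:
        counts[record["type"]] = counts.get(record["type"], 0) + 1
    summary = ", ".join(f"{n} {kind}" for kind, n in sorted(counts.items()))
    return {"records": records, "summary": summary}


output = process([
    "Refund of $40.00 requested by jane@example.com",
    "Service returns E502 after deploy",
])
print(output["summary"])
```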

Multi-Provider Quality Control

Same input → three parallel LLM blocks (OpenAI, Anthropic, local model) → a fourth block compares all three outputs and selects the best → Output. Automated quality control across providers.

Resilient Report Generation

Input data → LLM reasoning (primary provider) → if rate-limited, failover to secondary provider → if both fail, route to Output with error details → schedule daily at 7 AM. Every scheduled run delivers either the report or a clear error record, even when APIs are unstable.

Content Production with Human Review

Brief → Generate draft (LLM) → Classify tone (LLM Label) → if "off-brand," route to Interactive Run for human review → if "on-brand," proceed to image generation → Output full content package.

Frequently Asked Questions

Is there software that can orchestrate multiple AI tasks together?

Yes. Feluda orchestrates multiple AI tasks in a single visual workflow — sequential chains, parallel branches, conditional routing, error failover, and scheduled execution. All on your desktop, no code required.

Can I run AI tasks in parallel?

Yes. Connect one block to multiple downstream blocks and they execute simultaneously. Use this for provider comparison, multi-path analysis, or redundant processing.

How does error handling work in orchestrated workflows?

Every AI block exposes typed error connections. You visually route each error type (rate limit, timeout, content filter, tool failure) to a recovery path — a different provider, a retry block, or a human review step.

Do I need to write code to orchestrate AI tasks?

No. Feluda Studio is a visual drag-and-drop canvas. You define the orchestration by placing blocks and drawing connections. No scripts, no YAML, no framework.

Start Orchestrating Your AI Tasks

Download Feluda for free. Build your first orchestrated AI workflow in minutes.